for Autonomous Driving

Introduction (~1.5min)

Most real-world 3D sensors, such as LiDARs, perform fixed scans of the entire environment and are decoupled from the recognition system that processes the sensor data. In this work, we propose a method for 3D object recognition using programmable light curtains, a resource-efficient controllable sensor that measures depth at user-specified locations in the environment. Crucially, we propose using the prediction uncertainty of a deep-learning-based 3D point cloud detector to guide active perception. Given a neural network's uncertainty, we derive an optimization objective for placing light curtains based on the principle of maximizing information gain. We then develop a novel and efficient algorithm that maximizes this objective by encoding the physical constraints of the device into a constraint graph and optimizing with dynamic programming. We show how a 3D detector can be trained to detect objects in a scene by sequentially placing uncertainty-guided light curtains that successively improve detection accuracy.
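The information-gain principle can be made concrete with a short derivation. This is a sketch under simplifying assumptions of our own, not necessarily the paper's exact formulation. Assume each candidate curtain location $i$ carries a binary variable $X_i$ (object present or not) that the detector predicts with probability $p_i$. Assume also that these variables are independent, and that a curtain $C$ observes every $X_i$ on it without noise, so its measurements $Y_C$ equal $X_C$.

```latex
\begin{aligned}
\mathrm{IG}(C) &= I(X_C; Y_C) = H(X_C) - H(X_C \mid Y_C) \\
               &= H(X_C) - 0 \qquad \text{(noise-free sensing: } Y_C = X_C\text{)} \\
               &= \sum_{i \in C} H(X_i) \qquad \text{(independence)}, \\
H(X_i) &= -p_i \log p_i - (1 - p_i) \log (1 - p_i).
\end{aligned}
```

Under these assumptions, maximizing information gain reduces to choosing the feasible curtain whose locations have the largest total entropy, that is, the largest summed uncertainty.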

Long Talk (~10min)

What is a Light Curtain?

A programmable light curtain is a recently invented controllable sensor that can measure the depth of any user-specified 2D vertical surface in the environment. It is relatively inexpensive and has much higher resolution than LiDAR. To learn more, please see our light curtain website, which gives an overview of light curtains, explains how they work, and lists our other work on them. In this work, we use light curtains for active detection in autonomous driving.

Active Detection Pipeline

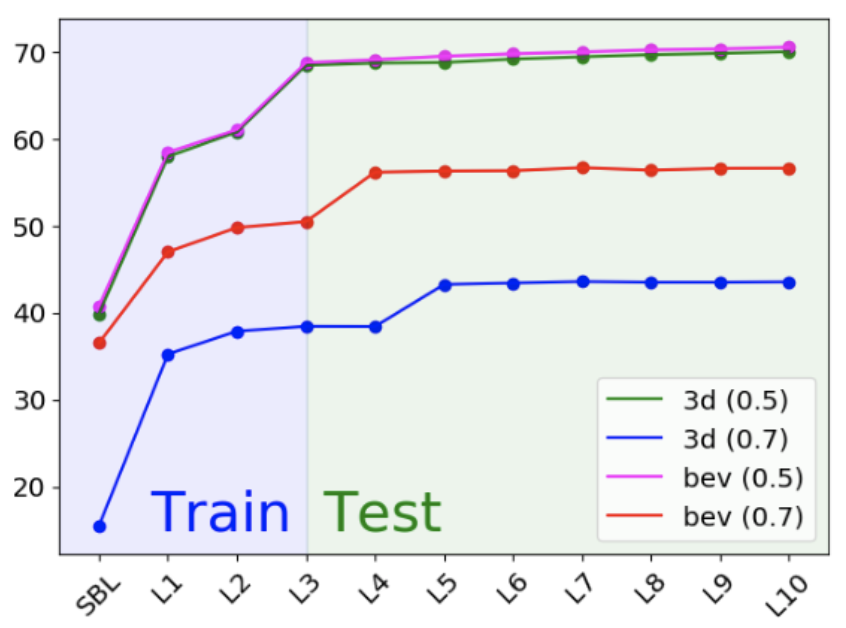

The points from a single-beam LiDAR are used to initialize a 3D object detector. The detector produces rough initial detections as well as an uncertainty map. The network's uncertainty is used to decide where to place the light curtain. The points sensed by this light curtain are fed back into the detector to update the detections and the uncertainty map. This process can be repeated multiple times in a loop to progressively sense the scene and improve detection accuracy.
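The sense-and-update loop can be illustrated with toy stand-ins. Everything in this sketch is hypothetical and heavily simplified. The scene is a grid of depth bins along camera rays, and `prob` plays the role of the detector's per-bin confidence. The "curtain" simply picks the highest-entropy bin on each ray, ignoring the device constraints. The simulated curtain returns noise-free occupancy, and "re-running the detector" just marks the sensed bins as certain. A real detector would instead re-process all sensed points jointly.

```python
import math
import random


def entropy(p):
    """Binary entropy in bits; zero when the outcome is certain."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def total_uncertainty(prob):
    """Sum of per-bin entropies: a scalar summary of the toy uncertainty map."""
    return sum(entropy(p) for ray in prob for p in ray)


def place_curtain(prob):
    """Hypothetical placement: the most uncertain depth bin on each ray.

    Ignores the device's physical constraints for brevity.
    """
    return [max(range(len(ray)), key=lambda j: entropy(ray[j])) for ray in prob]


def sense(curtain, truth):
    """Hypothetical noise-free light curtain: true occupancy at each chosen bin."""
    return [truth[i][j] for i, j in enumerate(curtain)]


def update(prob, curtain, readings):
    """Stand-in for re-running the detector: sensed bins become certain."""
    for i, (j, occupied) in enumerate(zip(curtain, readings)):
        prob[i][j] = 1.0 if occupied else 0.0


def active_detection(prob, truth, num_curtains):
    """Run the place-sense-update loop; return total uncertainty after each step."""
    history = [total_uncertainty(prob)]
    for _ in range(num_curtains):
        curtain = place_curtain(prob)
        update(prob, curtain, sense(curtain, truth))
        history.append(total_uncertainty(prob))
    return history


if __name__ == "__main__":
    random.seed(0)
    num_rays, num_bins = 8, 5
    truth = [[random.random() < 0.2 for _ in range(num_bins)] for _ in range(num_rays)]
    prob = [[random.uniform(0.05, 0.95) for _ in range(num_bins)] for _ in range(num_rays)]
    print(active_detection(prob, truth, num_curtains=3))
```

Each iteration can only lower the summed uncertainty in this toy, which mirrors the intent of repeatedly placing curtains where the detector is least sure.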

Light Curtain Optimization

Constraint graph: The physical constraints of the light curtain are encoded into a graph. Candidate control points on each ray constitute the nodes of the graph. An edge exists between two nodes on consecutive rays if the laser is able to successively illuminate the two points while staying within its velocity limits.
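A simplified, top-down sketch of how such a graph might be built. The assumptions here are ours, for illustration. The camera sits at the origin with its rays fanning out at `ray_angles`, and candidate nodes lie at fixed `ranges` along each ray. The laser sits at `(baseline, 0)`. The velocity limit is folded into a single hypothetical `max_step`, the largest laser rotation allowed between consecutive rays (maximum angular velocity times the time spent per ray). The actual device model may differ.

```python
import math


def laser_angle(x, z, baseline):
    """Angle (radians, from the forward z-axis) at which a laser placed at
    (baseline, 0) must point to hit the point (x, z)."""
    return math.atan2(x - baseline, z)


def build_constraint_graph(ray_angles, ranges, baseline, max_step):
    """Build a layered constraint graph (simplified top-down view).

    nodes[i][j] is the (x, z) point at ranges[j] along camera ray i.
    edges[i] is the set of (j, k) pairs such that the laser can move from
    nodes[i][j] to nodes[i + 1][k] within max_step radians, a hypothetical
    stand-in for (max angular velocity) * (time per ray).
    """
    nodes = [[(r * math.sin(theta), r * math.cos(theta)) for r in ranges]
             for theta in ray_angles]
    angles = [[laser_angle(x, z, baseline) for (x, z) in ray] for ray in nodes]
    edges = []
    for i in range(len(nodes) - 1):
        pairs = set()
        for j, phi_j in enumerate(angles[i]):
            for k, phi_k in enumerate(angles[i + 1]):
                if abs(phi_k - phi_j) <= max_step:
                    pairs.add((j, k))
        edges.append(pairs)
    return nodes, edges


if __name__ == "__main__":
    ray_angles = [math.radians(d) for d in range(-20, 21, 10)]  # 5 camera rays
    ranges = [5.0, 10.0, 15.0, 20.0]                            # candidate depths (m)
    nodes, edges = build_constraint_graph(ray_angles, ranges,
                                          baseline=0.2, max_step=math.radians(12))
    for i, pairs in enumerate(edges):
        print(f"ray {i} -> ray {i + 1}: {len(pairs)} feasible transitions")
```

Since edges only ever join consecutive rays, the graph is layered, which is what makes a dynamic-programming search over it efficient.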

Dynamic programming: An uncertainty value is assigned to each node from the detector's uncertainty map. Dynamic programming is then used to find the feasible light curtain that maximizes the sum of uncertainties over its nodes. Placing this curtain in the environment provides the most information to the detector.
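Because edges only connect consecutive rays, the max-sum feasible curtain can be found with a Viterbi-style dynamic program: one forward pass over the rays followed by a backtrack. This is a generic sketch under our own assumptions. `values[i][j]` is the uncertainty of node `j` on ray `i`, and `edges[i]` is the set of allowed `(j, k)` transitions from ray `i` to ray `i + 1`. The toy example uses a hypothetical stand-in constraint that the depth-bin index may change by at most one between neighboring rays.

```python
def best_curtain(values, edges):
    """Max-sum path through a layered graph, one node per ray.

    Returns (best total uncertainty, list of chosen node indices per ray).
    """
    neg_inf = float("-inf")
    score = [list(values[0])]  # best total of any feasible path ending at each node
    back = []                  # back[i][k]: best predecessor on ray i of node k on ray i + 1
    for i in range(1, len(values)):
        cur = [neg_inf] * len(values[i])
        ptr = [-1] * len(values[i])
        for j, k in edges[i - 1]:
            if score[i - 1][j] == neg_inf:
                continue  # node j is unreachable
            cand = score[i - 1][j] + values[i][k]
            if cand > cur[k]:
                cur[k], ptr[k] = cand, j
        score.append(cur)
        back.append(ptr)
    last = max(range(len(values[-1])), key=lambda k: score[-1][k])
    total = score[-1][last]
    if total == neg_inf:
        raise ValueError("no feasible curtain")
    path = [last]
    for i in range(len(values) - 2, -1, -1):
        path.append(back[i][path[-1]])  # follow predecessors back to ray 0
    path.reverse()
    return total, path


if __name__ == "__main__":
    values = [[0.1, 0.9, 0.2],
              [0.8, 0.1, 0.3],
              [0.2, 0.2, 0.9]]
    # Hypothetical constraint: the bin index changes by at most 1 between rays.
    edges = [{(j, k) for j in range(3) for k in range(3) if abs(j - k) <= 1}
             for _ in range(len(values) - 1)]
    print(best_curtain(values, edges))  # (2.1, [1, 2, 2])
```

In this toy, picking the most uncertain node on each ray independently would give `[1, 0, 2]`, which is infeasible because it jumps two bins between the last two rays; the dynamic program instead returns the best feasible curtain, `[1, 2, 2]`.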


Qualitative Results

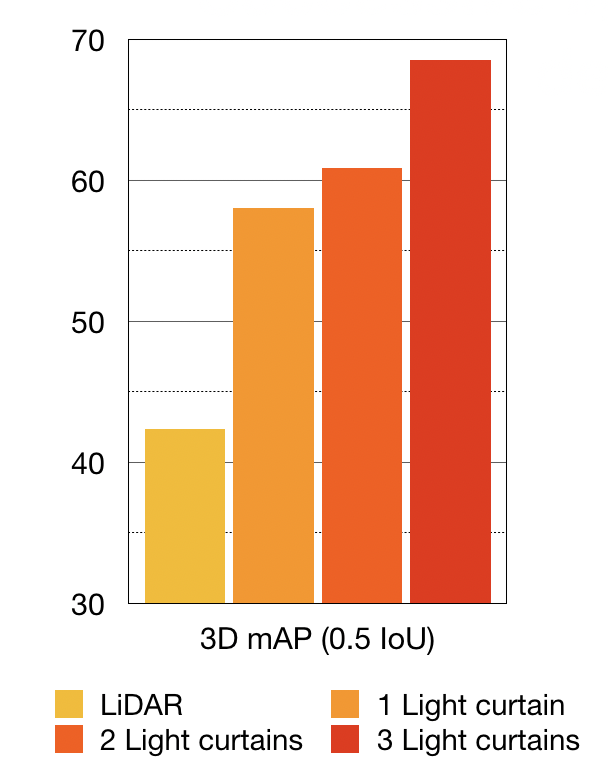

Quantitative Results

Source Code

We have released our implementation and source code on GitHub. Try our code!


Citation

@InProceedings{Ancha_2020_ECCV,
author="Ancha, Siddharth
and Raaj, Yaadhav
and Hu, Peiyun
and Narasimhan, Srinivasa G.
and Held, David",
editor="Vedaldi, Andrea
and Bischof, Horst
and Brox, Thomas
and Frahm, Jan-Michael",
title="Active Perception Using Light Curtains for Autonomous Driving",
booktitle="Computer Vision -- ECCV 2020",
year="2020",
publisher="Springer International Publishing",
address="Cham",
pages="751--766",
isbn="978-3-030-58558-7"
}


Acknowledgements

Disclaimer: Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation, United States Air Force and DARPA.